When Did It Happen? Temporal Moment Localization in Video Question Answering

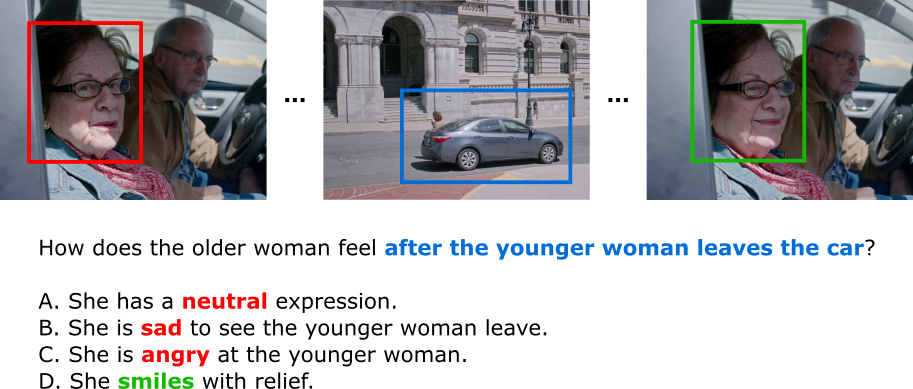

Description: Emotion-LLaMA [1] excels at emotion recognition from short clips, but scenes in the MoMentS dataset [2] are often much longer. This project develops a method to select the most emotionally relevant 2–4 second subclip from longer video segments, to provide the best input to Emotion-LLaMA. By identifying the exact moment tied to a question, the system improves the model's ability to reason about characters' emotional states. The result is sharper, more accurate emotion understanding in video question answering.

Goals:

- Build a model to localize the most relevant short subclip for each emotion question

- Use multimodal cues (visual, audio, subtitle) to detect emotionally rich moments

- Feed only the selected subclip into Emotion-LLaMA for emotion inference

- Evaluate improvements on emotion-related VQA in MoMentS

Supervisor: Victor Oei

Distribution: 10% Literature Review, 70% Implementation, 20% Analysis

Requirements: knowledge in deep learning, computer vision, PyTorch, multimodal processing

Literature:

[1] Cheng et al. (2024). Emotion-llama: Multimodal emotion recognition and reasoning with instruction tuning.

[2] Villa-Cueva et al. (2025). MOMENTS: A Comprehensive Multimodal Benchmark for Theory of Mind.