CLEVR Reasoning of Humans and Machines

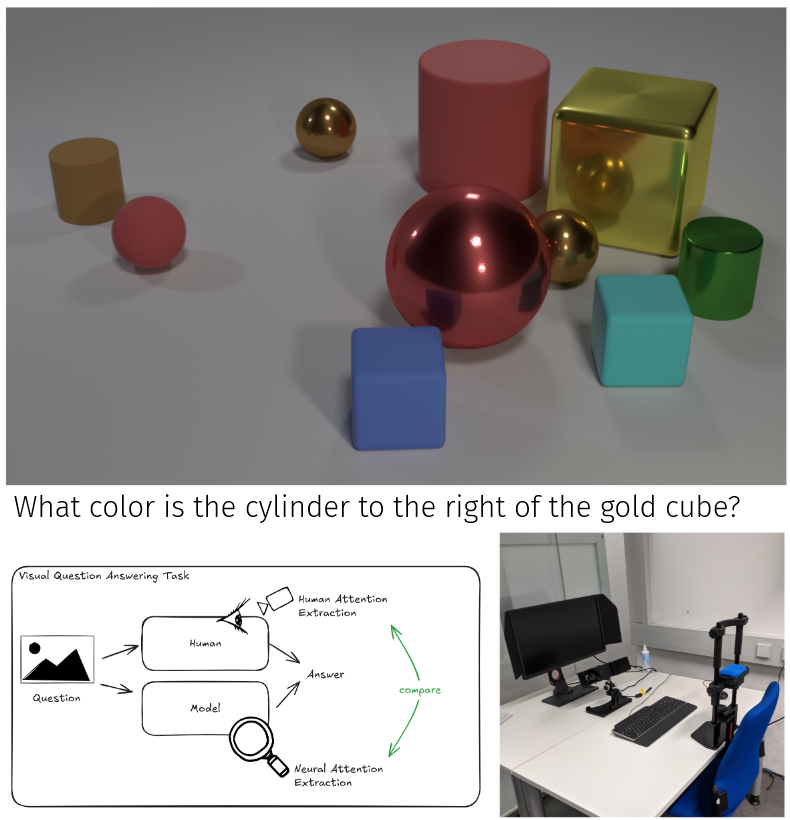

Description: When humans and machines work together, understanding each other’s reasoning is important. This includes building common ground by looking at the same key areas (shared attention), recognizing the same concepts there (shared understanding) and drawing similar conclusions (shared reasoning). To investigate how humans and machines perceive, conceptualize and reason over situations, this project uses the CLEVR dataset [1], which contains simple compositional language and visual reasoning tasks. Even though modern multimodal large language models can solve CLEVR, they struggle on task variations that are trivial for humans, which suggests that their internal processing differs from that of humans [2]. We will conduct an eye tracking study to collect human gaze and reasoning data on CLEVR tasks (similar to [3]), apply explainability methods to extract machine attention, and compare the human and machine results.

Goals:

- design and run a human eye tracking study on CLEVR reasoning tasks

- extract the attention and reasoning process from models and humans

- compare human and machine reasoning using correlation metrics
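
The last goal could be approached, for example, by correlating a human gaze heatmap with a model attention map for the same CLEVR image. The following is only a minimal sketch: the project description does not fix the metrics, so the choice of Pearson and Spearman correlation is an assumption, as are the function names and the toy 3x3 maps. It also assumes both maps have already been resampled to the same grid.

```python
import numpy as np


def pearson_cc(a, b):
    """Pearson linear correlation between two maps of equal shape."""
    a = np.ravel(np.asarray(a, dtype=float))
    b = np.ravel(np.asarray(b, dtype=float))
    if a.shape != b.shape:
        raise ValueError("maps must have the same number of cells")
    # Note: a constant map has zero variance, so the result is NaN.
    return float(np.corrcoef(a, b)[0, 1])


def average_ranks(x):
    """1-based ranks of the flattened input; tied values share their mean rank."""
    x = np.ravel(np.asarray(x, dtype=float))
    _, inverse, counts = np.unique(x, return_inverse=True, return_counts=True)
    ends = np.cumsum(counts)        # last rank occupied by each tie group
    starts = ends - counts + 1      # first rank occupied by each tie group
    return ((starts + ends) / 2.0)[inverse]


def spearman_rho(a, b):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    return pearson_cc(average_ranks(a), average_ranks(b))


# Hypothetical toy data: a human fixation density map and a model
# attention map over the same 3x3 grid of image regions.
human_gaze = np.array([[0.0, 0.1, 0.0],
                       [0.2, 0.9, 0.3],
                       [0.0, 0.1, 0.0]])
model_attention = np.array([[0.1, 0.0, 0.0],
                            [0.1, 0.7, 0.5],
                            [0.0, 0.2, 0.1]])

print("Pearson CC:  ", pearson_cc(human_gaze, model_attention))
print("Spearman rho:", spearman_rho(human_gaze, model_attention))
```

Pearson correlation is sensitive to the magnitude of the peaks, while Spearman correlation only compares the ordering of regions, so reporting both can show whether humans and models agree on which regions matter even when the absolute weights differ.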

Supervisor: Fabian Kögel

Distribution: 10% literature review, 70% study design / implementation, 20% analysis

Requirements: high motivation, programming skills in Python and PyTorch, experience using Hugging Face models, ideally experience in user studies / eye tracking

Literature: [1] Johnson, J. et al. 2017. CLEVR: A Diagnostic Dataset for Compositional Language and Elementary Visual Reasoning. CVPR 2017

[2] Verma, A.A. et al. 2024. Evaluating Multimodal Large Language Models across Distribution Shifts and Augmentations. CVPRW 2024

[3] Sood, E. et al. 2021. VQA-MHUG: A Gaze Dataset to Study Multimodal Neural Attention in Visual Question Answering. CoNLL 2021