Adaptive Ad-Hoc Teamwork via Meta-RL

Description: Recent advances in reinforcement learning (RL) have enabled artificial agents to achieve remarkable performance in domains such as Go and Atari, and to power large language models that serve as general-purpose assistants. Despite these successes, current systems struggle to cooperate effectively with humans, particularly when trained only through self-play (Carroll et al., 2019). Existing approaches achieve limited success in simplified coordination tasks using fixed strategies (Strouse et al., 2021; Zhao et al., 2023; Yu et al., 2023; Ruhdorfer et al., 2025a, 2025b), but they are designed for one-shot interaction: the agent is tested with a partner once and retains nothing about that partner afterwards. In human-human cooperation, by contrast, people typically build a model of how the other person behaves over multiple interactions, an aspect that current ad-hoc teamwork methods and evaluation protocols mostly overlook. As a result, agents lack the ability to adapt to diverse partner behaviour over time.

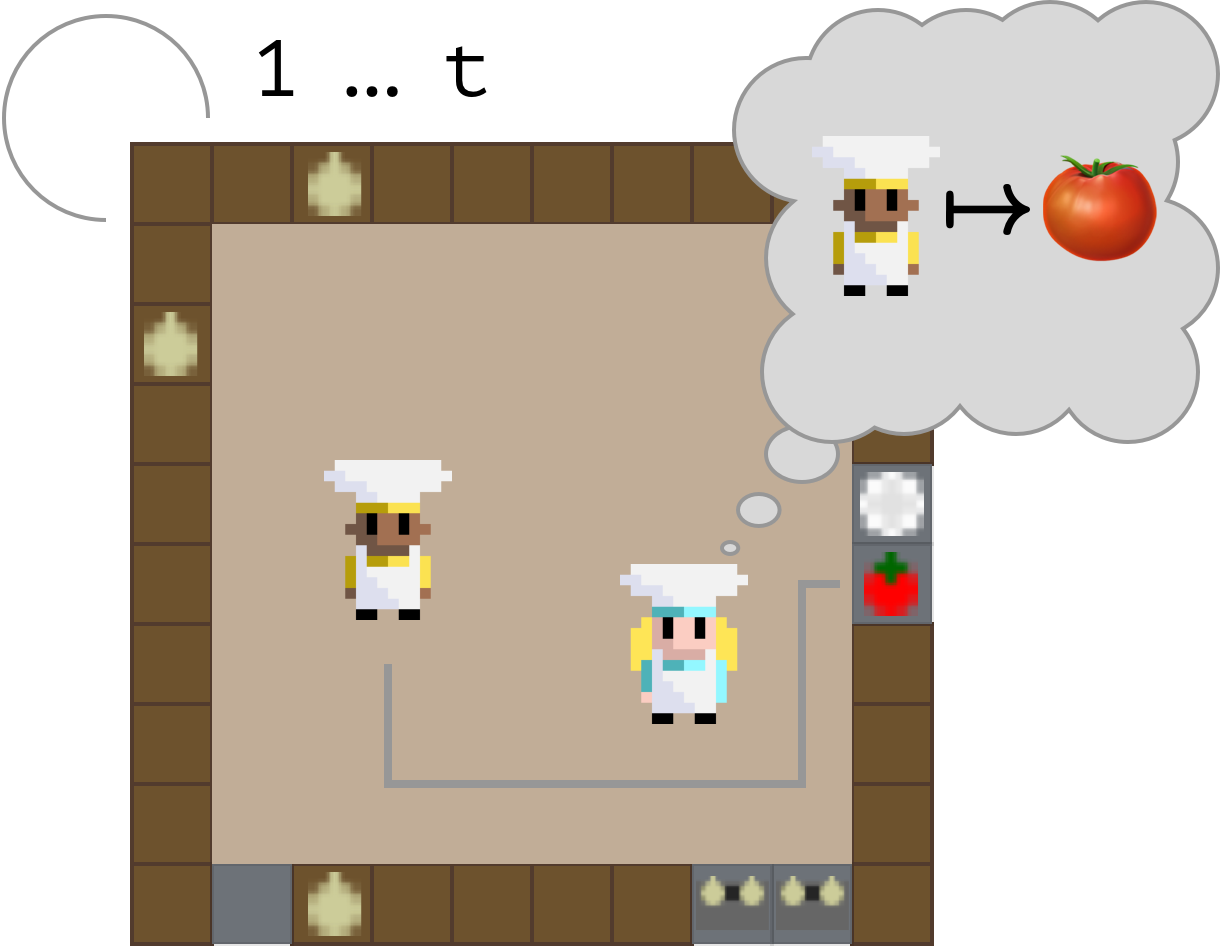

This project aims to advance the foundations of ad-hoc teamwork (Stone et al., 2010) by exploring how agents can learn to learn from unknown partners and adapt dynamically as collaboration unfolds. Building on the Unsupervised Partner Design (UPD) framework (Ruhdorfer et al., 2025b), the thesis will develop AI systems that can anticipate, model, and adjust to a partner’s unique behaviour within a few interactions. This focus contrasts with a large body of work that evaluates only zero-shot teamwork (Strouse et al., 2021; Zhao et al., 2023; Yu et al., 2023; Ruhdorfer et al., 2025a, 2025b).

Methodologically, the project aims to complete two work packages:

- Benchmark Development: First, the project will develop a new suite of few-shot interaction tasks in which one-shot teamwork has little chance of succeeding, inspired by the Baby Intuitions Benchmark (Gandhi et al., 2021).

- Adaptive Agent: Second, the project will aim to develop a solution to this problem. It will combine ideas from meta-learning in the XLand line of work (Bauer et al., 2023) with Theory-of-Mind-inspired architectures such as ToMNet (Rabinowitz et al., 2018) to enable agents to infer partner goals and preferences in real time. The method will be evaluated on the newly created benchmark. Optionally, it can also be evaluated on established cooperative benchmarks such as Overcooked (Carroll et al., 2019), OvercookedV2 (Gessler et al., 2024), the Overcooked Generalisation Challenge (Ruhdorfer et al., 2025a), the Yokai Learning Environment (Ruhdorfer et al., 2025c), and Hanabi (Bard et al., 2020).
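
To make the difference between one-shot and few-shot teamwork concrete, the following minimal Python sketch compares an agent that forgets its partner after every episode with one that remembers the partner's past choices. It is only a toy illustration under stated assumptions, not the benchmark or method the thesis will develop: the matching game, `NUM_TARGETS`, and the classes `FixedPreferencePartner`, `MemorylessAgent`, and `CountingAgent` are all hypothetical, and the counting rule stands in for the learned partner model that a meta-RL or ToMNet-style agent would acquire.

```python
import random
from collections import Counter

NUM_TARGETS = 4  # hypothetical number of options in the toy matching game


class FixedPreferencePartner:
    """Toy partner that always picks its hidden preferred target."""

    def __init__(self, preferred):
        self.preferred = preferred

    def act(self):
        return self.preferred


class MemorylessAgent:
    """One-shot baseline: keeps no information about the partner."""

    def __init__(self, rng):
        self.rng = rng

    def act(self):
        return self.rng.randrange(NUM_TARGETS)

    def observe(self, partner_action):
        pass  # nothing is remembered between episodes


class CountingAgent:
    """Adaptive baseline: counts the partner's choices across episodes."""

    def __init__(self, rng):
        self.rng = rng
        self.counts = Counter()

    def act(self):
        if not self.counts:  # first episode: no evidence about the partner yet
            return self.rng.randrange(NUM_TARGETS)
        return self.counts.most_common(1)[0][0]

    def observe(self, partner_action):
        self.counts[partner_action] += 1


def run_trial(agent, partner, num_episodes):
    """Play several episodes with the SAME partner; reward 1 if choices match."""
    rewards = []
    for _ in range(num_episodes):
        agent_action = agent.act()
        partner_action = partner.act()
        rewards.append(1 if agent_action == partner_action else 0)
        agent.observe(partner_action)
    return rewards


def mean_reward_per_episode(agent_cls, num_partners=200, num_episodes=5, seed=0):
    """Average reward at each episode index over many freshly sampled partners."""
    rng = random.Random(seed)
    totals = [0] * num_episodes
    for _ in range(num_partners):
        partner = FixedPreferencePartner(rng.randrange(NUM_TARGETS))
        agent = agent_cls(rng)  # a new agent for every new partner
        for i, reward in enumerate(run_trial(agent, partner, num_episodes)):
            totals[i] += reward
    return [total / num_partners for total in totals]


if __name__ == "__main__":
    print("memoryless:", mean_reward_per_episode(MemorylessAgent))
    print("counting:  ", mean_reward_per_episode(CountingAgent))
```

Because the toy partner is deterministic, the counting agent matches it in every episode after the first, while the memoryless agent stays near chance level (1 in `NUM_TARGETS`) throughout. Reporting reward per episode index, rather than a single aggregate score, is one simple way an evaluation protocol could expose whether an agent adapts to its partner over repeated interactions.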

Overarching research question:

- Can an agent learn to model its partner from multiple interactions?

Supervisor: Constantin Ruhdorfer

Distribution: 20% literature review, 60% implementation, 20% analysis

Requirements: Good knowledge of deep learning and reinforcement learning, strong programming skills in Python and PyTorch and/or JAX, and self-management skills. The thesis requires learning JAX along the way; experience in PyTorch is sufficient to start.

Literature:

Strouse, D. J., McKee, K., Botvinick, M., Hughes, E., & Everett, R. (2021). Collaborating with humans without human data. Advances in neural information processing systems, 34, 14502-14515.

Zhao, R., Song, J., Yuan, Y., Hu, H., Gao, Y., Wu, Y., ... & Yang, W. (2023, June). Maximum entropy population-based training for zero-shot human-AI coordination. In Proceedings of the AAAI Conference on Artificial Intelligence (Vol. 37, No. 5, pp. 6145-6153).

Yu, C., Gao, J., Liu, W., Xu, B., Tang, H., Yang, J., ... & Wu, Y. (2023). Learning Zero-Shot Cooperation with Humans, Assuming Humans Are Biased. In The Eleventh International Conference on Learning Representations.

Rabinowitz, N., Perbet, F., Song, F., Zhang, C., Eslami, S. M. A., & Botvinick, M. (2018). Machine Theory of Mind. Proceedings of the 35th International Conference on Machine Learning, in Proceedings of Machine Learning Research.

Gessler, T., Dizdarevic, T., Calinescu, A., Ellis, B., Lupu, A., & Foerster, J. N. (2024). OvercookedV2: Rethinking Overcooked for Zero-Shot Coordination. In The Thirteenth International Conference on Learning Representations.

Bard, N., Foerster, J. N., Chandar, S., Burch, N., Lanctot, M., Song, H. F., ... & Bowling, M. (2020). The Hanabi challenge: A new frontier for AI research. Artificial Intelligence, 280, 103216.

Bauer, J., Baumli, K., Behbahani, F., Bhoopchand, A., Bradley-Schmieg, N., Chang, M., ... & Zhang, L. M. (2023, July). Human-timescale adaptation in an open-ended task space. In International Conference on Machine Learning (pp. 1887-1935). PMLR.

Gandhi, K., Stojnic, G., Lake, B. M., & Dillon, M. R. (2021). Baby Intuitions Benchmark (BIB): Discerning the goals, preferences, and actions of others. Advances in neural information processing systems, 34, 9963-9976.

Ruhdorfer, C., Bortoletto, M., Penzkofer, A., & Bulling, A. (2025a). The Overcooked Generalisation Challenge: Evaluating Cooperation with Novel Partners in Unknown Environments Using Unsupervised Environment Design. Transactions on Machine Learning Research.

Ruhdorfer, C., Bortoletto, M., Oei, V., Penzkofer, A., & Bulling, A. (2025b). Unsupervised Partner Design Enables Robust Ad-hoc Teamwork.

Ruhdorfer, C., Bortoletto, M., & Bulling, A. (2025c). The Yokai Learning Environment: Tracking Beliefs Over Space and Time. IJCAI ToM 2025.